Practical AI in Salesforce: Low-Risk Wins You Can Implement Today

Dan Probert, CTO

There’s no shortage of noise around AI in Salesforce right now. Agentic futures, autonomous decision-making, vast new data architectures — all interesting, all powerful, and all a little overwhelming if you’re responsible for delivering value today rather than pitching tomorrow.

What I want to focus on here is something much simpler: practical, low-risk, low-cost uses of generative AI that organisations can implement right now inside Salesforce, without re-platforming data, rebuilding operating models, or taking unnecessary compliance risks.

In our work at Hyphen8, the most effective entry points for AI adoption share three traits:

• The data already exists

• The output supports a human, rather than replacing them

• The implementation avoids complex new infrastructure

Two areas where this consistently works well for many nonprofits and public sector organisations are due diligence and assessment.

Why these use cases work as an AI entry point

In both cases:

• You already have structured and unstructured data in Salesforce

• The work is time-consuming but highly repeatable

• The output is typically a checklist, summary, or written narrative

• A human reviewer is still accountable for the final decision

This makes them ideal for generative AI. We’re not asking AI to decide anything. We’re asking it to read, compare, summarise and draft, which is exactly what modern language models are good at.

Crucially, this can often be done without introducing Data Cloud, complex model orchestration, or large-scale data re-engineering. In many cases, smaller or older models are sufficient, which also helps reduce cost and environmental impact.

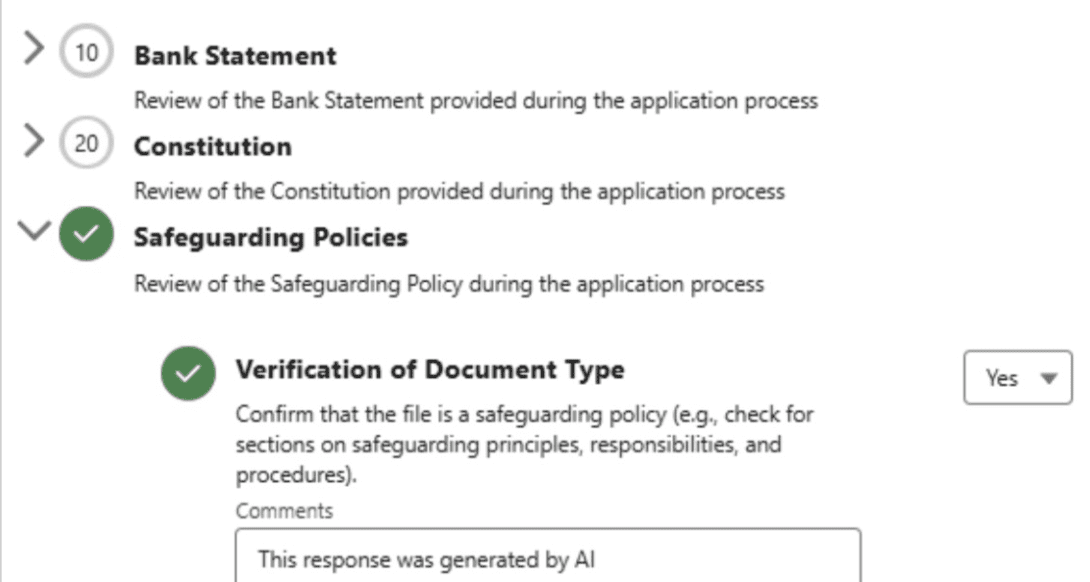

Use Case 1: Due diligence on applications

Due diligence often involves reviewing large numbers of submitted files and application answers, then checking them against programme rules or eligibility criteria.

Rather than replacing the reviewer, AI produces a structured checklist. The output is not a decision, but a clear summary of:

• Missing or incomplete evidence

• Misalignment with eligibility criteria

• Areas needing clarification

A human still reviews the checklist, applies judgement, and signs off.
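To make the checklist idea concrete, here is a minimal Python sketch. It assumes the model is asked to reply in JSON with three lists matching the bullets above. The key names, prompt wording and helper functions are all hypothetical, and the call to the model itself (however your org makes it) is left out. Note that the parsed structure has no approve or reject field: sign-off stays with the reviewer.

```python
import json
from dataclasses import dataclass, field


@dataclass
class DueDiligenceChecklist:
    """Structured, reviewable output; deliberately has no approve/reject field."""
    missing_evidence: list[str] = field(default_factory=list)
    criteria_misalignment: list[str] = field(default_factory=list)
    needs_clarification: list[str] = field(default_factory=list)
    reviewer_signed_off: bool = False  # only a human reviewer sets this


def build_due_diligence_prompt(answers: dict[str, str], criteria: list[str]) -> str:
    """Assemble a prompt from application answers and eligibility criteria (hypothetical wording)."""
    answer_lines = "\n".join(f"- {q}: {a or '[no answer]'}" for q, a in answers.items())
    criteria_lines = "\n".join(f"- {c}" for c in criteria)
    return (
        "You are assisting a grants reviewer. Do not make a decision.\n"
        "Compare the application answers with the eligibility criteria and return JSON "
        'with keys "missing_evidence", "criteria_misalignment" and "needs_clarification", '
        "each a list of short strings.\n\n"
        f"Eligibility criteria:\n{criteria_lines}\n\n"
        f"Application answers:\n{answer_lines}\n"
    )


def parse_checklist(raw: str) -> DueDiligenceChecklist:
    """Parse the model's JSON reply; unknown keys are ignored, missing keys default to empty."""
    data = json.loads(raw)
    return DueDiligenceChecklist(
        missing_evidence=list(data.get("missing_evidence", [])),
        criteria_misalignment=list(data.get("criteria_misalignment", [])),
        needs_clarification=list(data.get("needs_clarification", [])),
    )


prompt = build_due_diligence_prompt(
    {"Annual income": "£85,000", "Latest accounts": ""},
    ["Annual income under £250,000", "Latest accounts uploaded"],
)
# Illustrative reply, written by hand as if it came back from the model
reply = '{"missing_evidence": ["Latest accounts not uploaded"], "criteria_misalignment": [], "needs_clarification": []}'
checklist = parse_checklist(reply)
print(checklist.missing_evidence)  # ['Latest accounts not uploaded']
```

Asking for structured JSON rather than free text makes the checklist easy to store against the application record and to show to the reviewer item by item.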

Use Case 2: Assessment against programme criteria

Assessment stages often require assessors to write narrative evaluations explaining how an application meets (or doesn’t meet) specific programme objectives.

Here, AI is used to draft an assessment using:

• The application content

• The programme criteria

• Guidance on tone and structure

The result is a first draft that a human assessor edits, refines, challenges and approves.
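A similar sketch for the assessment draft, again in Python and again hypothetical: one function combines the three inputs listed above into a drafting prompt, and a small record class keeps the draft unapproved until a named assessor signs it off. Any revision clears an earlier approval, so the approved text is always the version the assessor last saw. The class and function names are illustrative, not a Salesforce API.

```python
from dataclasses import dataclass
from typing import Optional


def build_assessment_prompt(application: str, criteria: list[str], guidance: str) -> str:
    """Combine application content, programme criteria and tone guidance (wording is illustrative)."""
    numbered = "\n".join(f"{i}. {c}" for i, c in enumerate(criteria, start=1))
    return (
        "Draft an assessment for a human assessor to edit. Address each criterion in turn "
        "and quote the application where possible.\n\n"
        f"Programme criteria:\n{numbered}\n\n"
        f"Tone and structure guidance:\n{guidance}\n\n"
        f"Application:\n{application}\n"
    )


@dataclass
class AssessmentDraft:
    text: str                          # starts as the model's first draft
    approved_by: Optional[str] = None  # set only when a named assessor approves

    def revise(self, new_text: str) -> None:
        """Assessor edits the draft; any revision clears a previous approval."""
        self.text = new_text
        self.approved_by = None

    def approve(self, assessor: str) -> None:
        """Record human sign-off; an empty name is rejected."""
        if not assessor.strip():
            raise ValueError("approval requires a named assessor")
        self.approved_by = assessor

    @property
    def is_final(self) -> bool:
        return self.approved_by is not None


prompt = build_assessment_prompt(
    "We run weekly youth football sessions...",
    ["Reaches young people aged 11-18", "Demonstrates community benefit"],
    "Plain English, one paragraph per criterion.",
)
draft = AssessmentDraft(text="(model output would go here)")
draft.revise("Criterion 1: sessions are aimed at 12 to 16 year olds...")
draft.approve("A. Assessor")
print(draft.is_final)  # True
```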

"Salesforce has actually given us more time to be human, we are able to spend more time with our grantees and donors in person" CFO of a Community Foundation

Embedding AI into the application journey

Once these patterns are established internally, the natural next step is embedding AI earlier in the process using Experience Cloud.

For example:

Question: Upload a copy of your constitution.

AI can review the uploaded document in real time and respond with feedback such as:

• This does not appear to be a constitution

• This document is missing expected sections

• This appears to be a valid constitution

Applicants can correct issues before submission, improving overall application quality and reducing downstream rework.
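The feedback step can be sketched as a mapping from a structured model verdict to the three messages above. This Python sketch assumes the model returns whether the file looks like a constitution and which sections it recognised; the expected section names are a hypothetical example, since each programme would define its own. The message is advisory only: the applicant can fix the file and re-upload.

```python
from dataclasses import dataclass

# Hypothetical list of sections a programme might expect in a constitution.
EXPECTED_SECTIONS = ("name", "objects", "powers", "membership", "dissolution")


@dataclass
class UploadVerdict:
    """Structured verdict assumed to come back from the model for one uploaded file."""
    looks_like_constitution: bool
    sections_found: set[str]


def constitution_feedback(verdict: UploadVerdict,
                          expected: tuple[str, ...] = EXPECTED_SECTIONS) -> str:
    """Turn the verdict into the applicant-facing message shown next to the upload question."""
    if not verdict.looks_like_constitution:
        return "This does not appear to be a constitution."
    missing = [s for s in expected if s not in verdict.sections_found]
    if missing:
        return "This document is missing expected sections: " + ", ".join(missing) + "."
    return "This appears to be a valid constitution."


verdict = UploadVerdict(True, {"name", "objects", "powers", "membership"})
print(constitution_feedback(verdict))
# This document is missing expected sections: dissolution.
```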

Supporting applications before submission

AI can also review draft applications against programme criteria before submission and provide feedback on:

• Weak or unclear responses

• Missing evidence

• Poor alignment with programme objectives

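Because every applicant receives feedback against the same criteria before their application is judged, this can improve fairness, clarity and efficiency without removing human decision-making.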

Experimenting and A/B testing by programme

A key advantage of this approach is that it allows controlled experimentation.

Because AI assistance can be enabled at a programme level, organisations can:

• Run the same programme with and without AI support

• Compare processing time, quality and outcomes

• Measure reductions in rework and clarification cycles

• Gather qualitative feedback from reviewers and applicants

This enables true A/B testing, providing real metrics to inform whether, where and how AI should be rolled out more widely.

Rather than committing to organisation-wide change upfront, teams can test, learn and iterate in a controlled, low-risk way.
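Once AI assistance is a programme-level switch, comparing the two arms is simple arithmetic. This Python sketch assumes each application record carries its programme, whether AI support was on, the hours spent processing it and the number of clarification cycles; the field names are hypothetical, and the sample figures are made up for illustration only.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class ApplicationRecord:
    programme: str
    ai_enabled: bool            # hypothetical programme-level AI switch
    processing_hours: float
    clarification_cycles: int


def compare_arms(records: list[ApplicationRecord], programme: str) -> dict:
    """Average processing time and clarification cycles, with vs without AI, for one programme."""
    summary = {}
    for label, flag in (("with_ai", True), ("without_ai", False)):
        arm = [r for r in records if r.programme == programme and r.ai_enabled == flag]
        if not arm:
            continue  # skip an arm with no applications yet
        summary[label] = {
            "applications": len(arm),
            "mean_processing_hours": mean(r.processing_hours for r in arm),
            "mean_clarification_cycles": mean(r.clarification_cycles for r in arm),
        }
    return summary


# Made-up figures, for illustration only
records = [
    ApplicationRecord("Community Grants", True, 2.0, 0),
    ApplicationRecord("Community Grants", True, 3.0, 1),
    ApplicationRecord("Community Grants", False, 4.0, 1),
    ApplicationRecord("Community Grants", False, 6.0, 2),
]
print(compare_arms(records, "Community Grants"))
```

With real data you would want enough applications in each arm, and ideally a significance test, before reading much into a difference in averages.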

A sensible way to start and scale

If you’re looking for a defensible way to start with AI in Salesforce, and a clear path to scale, the principles are simple:

• Start where humans already review and write

• Keep AI in a supporting role

• Make outputs transparent and editable

• Embed AI where it reduces friction

• Use programme-level experimentation to guide rollout

At Hyphen8, we believe the most valuable AI is the kind that quietly makes people better at their jobs and makes processes clearer and fairer for users. Taking this approach, our customers are seeing huge time savings and improved user experiences, which are fuelling their confidence to apply AI to wider use cases.

Get these foundations right, and everything else becomes much easier.